Do the Data Speak for Themselves? A Bayesian Analysis of a Labor Law Case

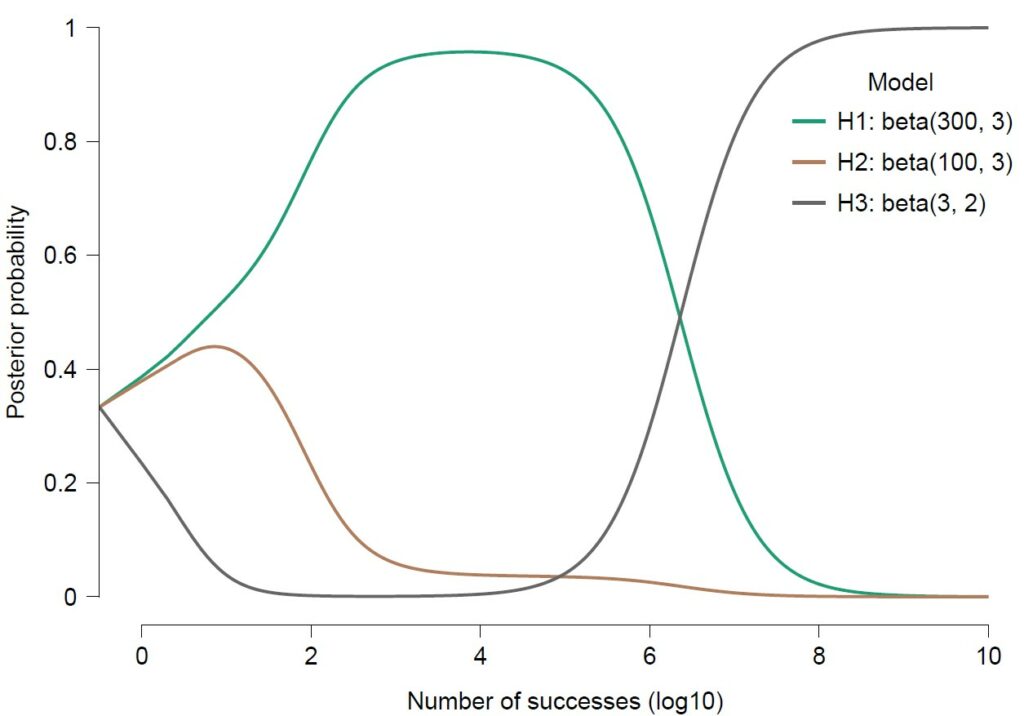

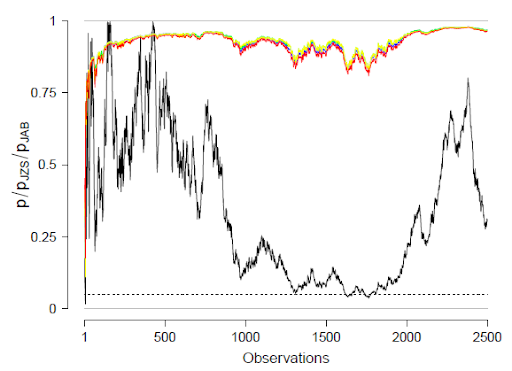

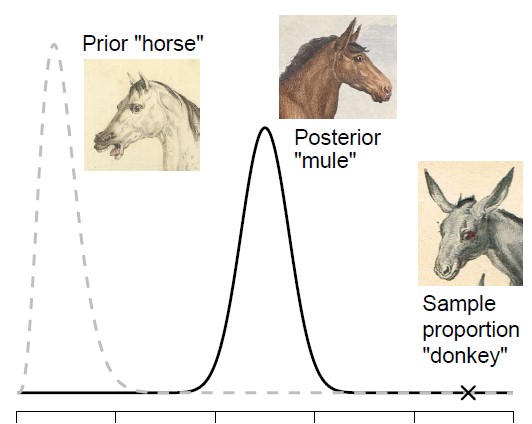

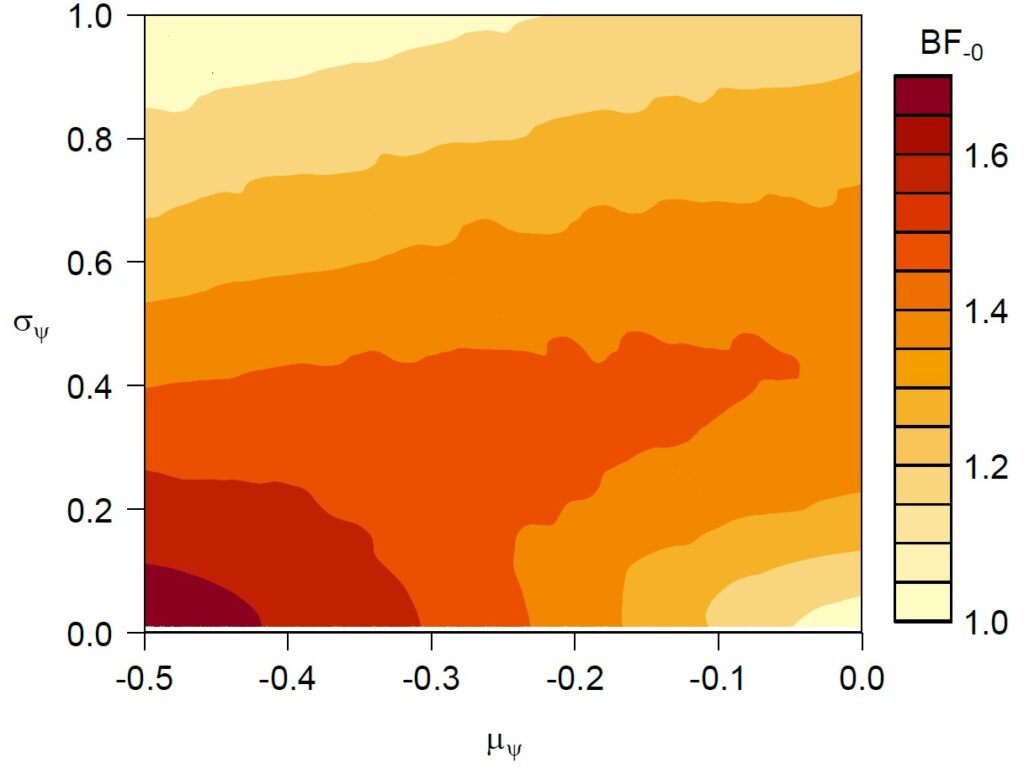

Here we present a Bayesian analysis of data from a recent labor law case [cc; see also Hummel, 2024]. In the case, several trade unions argued that two judgments of the Dutch Supreme Court (the “Enerco” judgment from 2014 and the “Amsta” judgment from 2015) effectively imply legal restrictions on collective actions. The trade unions argue that…