Why Academics Should Use AI for Writing: A Case Study

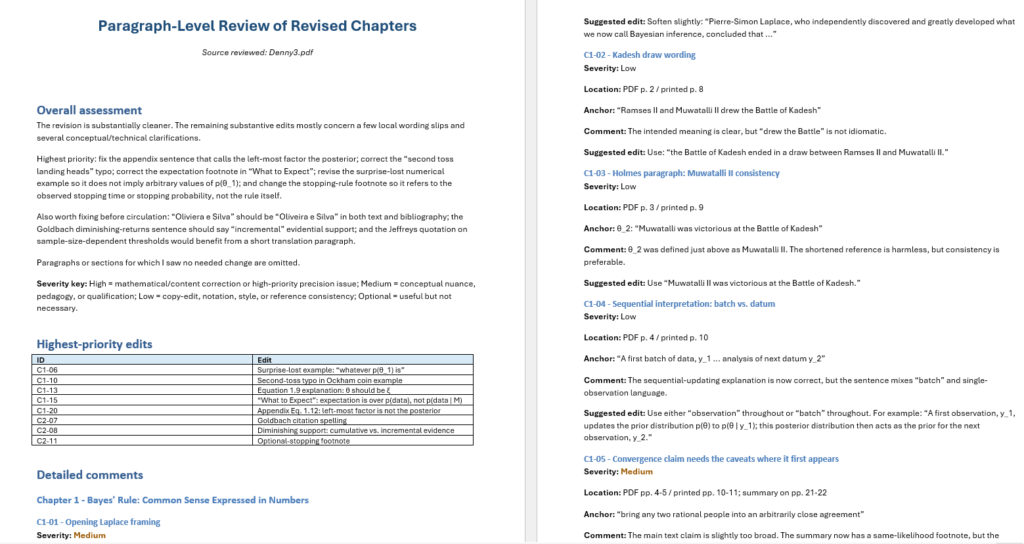

TL;DR: In the current era of AI, there is no longer any excuse for manuscripts that contain typographical errors, grammatical errors, unclear formulations, errors in equations, incorrect or missing references, or a demonstrated lack of awareness of related work. A recent example of my own writing demonstrates that an AI (i.e., ChatGPT Pro) can offer high-quality feedback. Incorporating two rounds…
