An often-voiced concern about p-value null hypothesis testing is that p-values cannot be used to quantify evidence in favor of the point null hypothesis. This is particularly worrisome if you conduct a replication study, if you perform an assumption check, if you hope to show empirical support for a theory that posits an invariance, or if you wish to argue that the data show “evidence of absence” instead of “absence of evidence”.

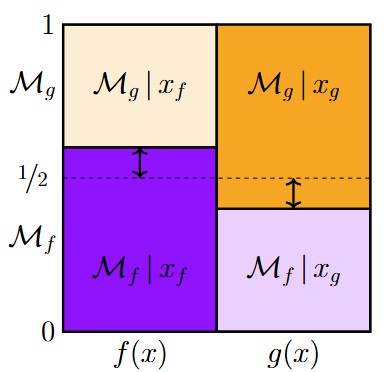

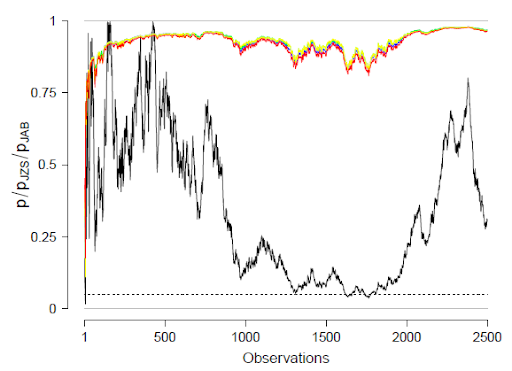

Researchers interested in quantifying evidence in favor of the point null hypothesis can of course turn to the Bayes factor, which compares the predictive performance of any two rival models. Crucially, the null hypothesis does not receive a special status: from the Bayes factor perspective, the null hypothesis is just another data-predicting device whose relative accuracy can be determined from the observed data. However, Bayes factors are not for everyone. Because Bayes factors assess predictive performance, they depend on the specification of prior distributions. Detractors argue that if these prior distributions are manifestly silly, or if one is unable to specify a model such that it makes predictions that are even remotely plausible, then the Bayes factor is a suboptimal tool. But what are the concrete alternatives to Bayes factors when it comes to quantifying evidence in favor of a point null hypothesis?
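For readers who like to see the definition written out, here is the standard form of the Bayes factor for a point null. The notation is my own choice for this sketch: $y$ denotes the data, $\theta$ the parameter of interest, $\theta_0$ its value under the null, and $\pi(\theta)$ the prior distribution under the alternative.

$$
\mathrm{BF}_{01} \;=\; \frac{p(y \mid \mathcal{H}_0)}{p(y \mid \mathcal{H}_1)} \;=\; \frac{p(y \mid \theta = \theta_0)}{\int p(y \mid \theta)\,\pi(\theta)\,\mathrm{d}\theta}
$$

The denominator averages the likelihood over the prior, which is exactly why the outcome depends on how that prior is specified.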

It is immediately clear that neither interval estimation methods, nor equivalence tests, nor the Bayesian “ROPE” (region of practical equivalence) can offer any solace, because these methods do not take the point null hypothesis seriously; their starting assumption is that the point null hypothesis is false. Even when the point null is changed to Tukey’s “perinull”, these methods are generally poorly equipped to quantify evidence. To see this, imagine we have a binomial test against chance, and we observe 52 successes out of 100 attempts. Surely this is evidence in favor of the point null hypothesis. But how much exactly? Evidence is that which changes our opinion: how much does observing 52 successes out of 100 attempts bolster our confidence in the point null? ROPE, equivalence tests, and interval estimation methods cannot answer this question.
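For comparison, here is a minimal sketch of how a Bayes factor would put a number on the 52-out-of-100 example. The priors are assumptions made purely for illustration: a uniform Beta(1, 1) prior on the success rate under the alternative, and a more peaked Beta(10, 10) prior to show how the answer moves with the prior. The function name `bf01_binomial` is hypothetical and does not come from any particular package.

```python
from math import exp, lgamma, log


def log_beta(a, b):
    """Logarithm of the Beta function B(a, b)."""
    return lgamma(a) + lgamma(b) - lgamma(a + b)


def bf01_binomial(k, n, a=1.0, b=1.0, theta0=0.5):
    """Bayes factor for H0: theta = theta0 versus H1: theta ~ Beta(a, b).

    The binomial coefficient appears in both marginal likelihoods and
    cancels, so it is omitted. The Beta(a, b) prior is an illustrative
    assumption, not a recommendation.
    """
    # Log marginal likelihood under the point null.
    log_m0 = k * log(theta0) + (n - k) * log(1 - theta0)
    # Log marginal likelihood under H1 (beta-binomial, coefficient dropped).
    log_m1 = log_beta(k + a, n - k + b) - log_beta(a, b)
    return exp(log_m0 - log_m1)


# 52 successes out of 100 attempts, tested against chance.
bf_uniform = bf01_binomial(52, 100)                   # assumed uniform prior
bf_peaked = bf01_binomial(52, 100, a=10.0, b=10.0)    # assumed peaked prior

print(f"BF01 with Beta(1, 1) prior:   {bf_uniform:.2f}")
print(f"BF01 with Beta(10, 10) prior: {bf_peaked:.2f}")
```

Under the uniform prior the data are roughly 7.4 times more likely under the point null than under the alternative. The peaked prior yields a smaller value because that alternative makes predictions that resemble those of the null.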

Also problematic are Bayesian methods that depend on the alternative hypothesis having advance access to the data, since such advance access allows the alternative hypothesis to mimic the point null, creating a non-diagnostic test in case the data are consistent with the point null. Should we despair? Are researchers who wish to quantify evidence in favor of a point null hypothesis doomed to compute a Bayes factor by specifying a concrete alternative hypothesis and assigning a point mass to the null? In a recent paper I outline all of the known alternatives to the Bayes factor and discuss their pros and cons. The ultimate goal is to provide the practitioner with a better impression of the different statistical tools that are available to quantify evidence in favor of a point null hypothesis. A preprint is available here.

References

Wagenmakers, E.-J. (2019). A comprehensive overview of methods to quantify evidence in favor of a point null hypothesis: Alternatives to the Bayes factor. Preprint.
