TL;DR: In 1847, Augustus De Morgan suggested that researchers could avoid overselling their work if, every time they made a key claim, they reminded the reader (and themselves) of how confident they were in making that claim. In 1971, Eric Minturn went further and proposed that such confidence could be expressed as a wager, with beneficial side-effects: “Replication would be encouraged. Graduate students would have a new source of money. Hypocrisy would be unmasked.”

The main principles of Open Science are modest: “don’t hide stuff” and “be completely honest”. Indeed, these principles are so fundamental that the term “Open Science” should be considered a pleonasm: openness is a defining characteristic of science, without which peers cannot properly judge the validity of the claims that are presented.

Unfortunately, in actual research practice, there are papers and careers on the line, making it difficult even for well-intentioned researchers to display the kind of scientific integrity that could very well torpedo their academic future. In other words, even though most if not all researchers will agree that it is crucial to be honest, it is not clear how such honesty can be expected, encouraged, and accepted.

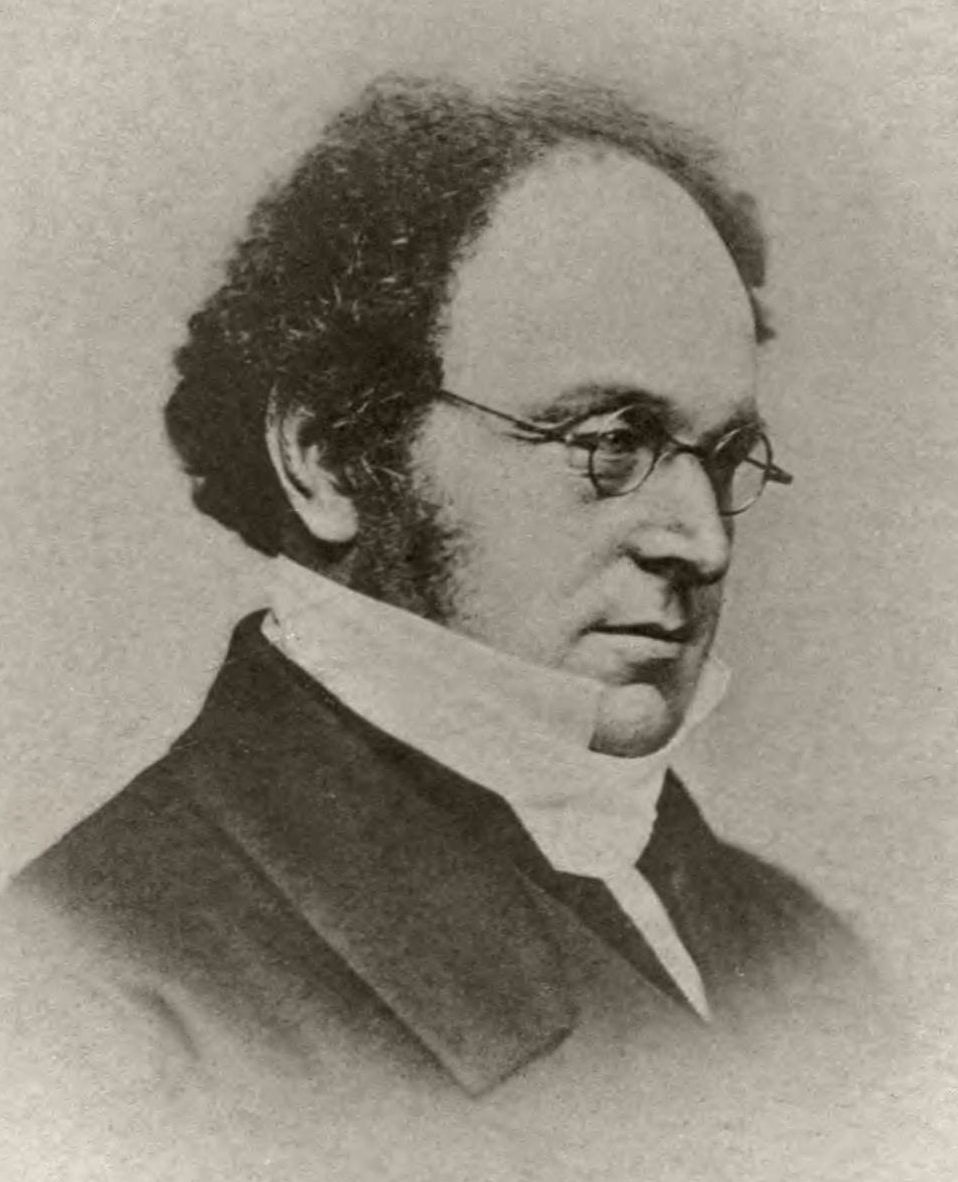

One suggestion to enforce honesty comes from Augustus De Morgan (1806–1871), a British logician and early Bayesian probabilist who popularized the work of Pierre-Simon Laplace (for details see Zabell, 2012, referenced below). In his 1847 book “Formal Logic: The Calculus of Inference, Necessary and Probable”, De Morgan promotes the use of probability theory to update knowledge concerning parameters and models, or, more generally, propositions. He foresees the problem of uncertainty allergy (“black-and-white thinking”) and presents a possible cure:

“The forms of language by which we endeavour to express different degrees of probability are easily interchanged; so that, without intentional dishonesty (but not always) the proposition may be made to slide out of one degree into another. I am satisfied that many writers would shrink from setting down, in the margin, each time they make a certain assertion, the numerical degree of probability with which they think they are justified in presenting it. Very often it happens that a conclusion produced from a balance of arguments, and first presented with the appearance of confidence which might be represented by a claim of such odds as four to one in its favour, is afterwards used as if it were a moral certainty. The writer who thus proceeds, would not do so if he were required to write 4:1 in the margin every time he uses that conclusion. This would prevent his falling into the error in which his partisan readers are generally sure to be more than ready to go with him, namely, turning all balances for, into demonstration, and all balances against, into evidence of impossibility.” (De Morgan, 1847/2003, p. 275)
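To make De Morgan's marginal note concrete: odds of four to one in favour correspond to a probability of 4/(4+1) = 0.8, which is well short of a “moral certainty”. The short Python sketch below does this conversion; the function name `odds_to_probability` is our own illustrative choice and does not come from De Morgan's text.

```python
from fractions import Fraction


def odds_to_probability(in_favour: int, against: int) -> Fraction:
    """Convert odds 'in_favour : against' into a probability.

    Hypothetical helper for illustration only.
    """
    if in_favour < 0 or against < 0 or in_favour + against == 0:
        raise ValueError("odds must be non-negative and not both zero")
    return Fraction(in_favour, in_favour + against)


# De Morgan's example: a conclusion held at odds of four to one.
p = odds_to_probability(4, 1)
print(p, float(p))  # 4/5 0.8
```

Seen this way, a conclusion held at 4:1 still leaves a one-in-five chance of being wrong, which is precisely what the marginal note is meant to keep in view.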

Little has changed in the past 171 years; if anything, it appears that things are now worse. In our experience, authors often draw strong conclusions from weak evidence even in the results section, where one may encounter statements such as “As expected, the three-way interaction was significant, p<.05”, or “We found the effect, p=.031”. Perhaps the strong confidence expressed in results sections arises in part from the use of Neyman-Pearson null-hypothesis testing, which is based on the idea that a researcher would want to make binary accept-reject decisions, much like a judge or a jury finding a defendant guilty or innocent. The in-between option “I am unsure” is simply not available as a legitimate conclusion. Now, there are situations where one needs to make binary decisions (“do I conduct another experiment, yes or no?”; “do I pursue this research idea further, yes or no?”), but, crucially, the knowledge and conviction that underlie the decision remain inherently graded, something that is easily forgotten. Juries, doctors, plumbers, and the occasional researcher: they all have to make all-or-none decisions, and, despite what they may say, they are never 100% sure. More accurately: they should never be 100% sure (in Bayesian statistics, this is known as Cromwell’s rule).
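Why one should never be 100% sure can be illustrated with Bayes' rule: a prior probability of exactly 0 or 1 cannot be moved by any amount of evidence, whereas a graded prior can. The sketch below is a minimal illustration under our own assumptions; the function name `update` and the likelihood ratio of 0.01 (data that are 100 times more likely if the claim is false) are hypothetical choices, not taken from any cited source.

```python
def update(prior: float, likelihood_ratio: float) -> float:
    """Posterior P(H | data) from Bayes' rule.

    likelihood_ratio = P(data | H) / P(data | not H), assumed > 0.
    Hypothetical helper for illustration only.
    """
    if not 0.0 <= prior <= 1.0:
        raise ValueError("prior must lie in [0, 1]")
    if likelihood_ratio <= 0.0:
        raise ValueError("likelihood_ratio must be positive")
    numerator = prior * likelihood_ratio
    return numerator / (numerator + (1.0 - prior))


# Strong evidence against H (assumed likelihood ratio of 0.01).
for prior in (0.8, 1.0, 0.0):
    print(f"prior={prior:.2f} -> posterior={update(prior, 0.01):.4f}")
```

With a prior of 0.8 the posterior drops to roughly 0.04, but with a prior of 1 (or 0) the posterior stays at 1 (or 0) whatever the data say.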

For instance, Judge Johnson might send Igor Igorevich to jail for five years for stealing corpses from a morgue, but how certain is she that Igor is really guilty? What if the rules were such that, if the verdict were later found to be wrong, the judge would have to serve time herself? (Granted, this would lessen the appeal of a law career across the board.) Would she still send Igor to jail for five years? And when George W. Bush claimed that Iraq had “weapons of mass destruction”, his confidence appeared high, but what if he had been told that, should his accusation prove without merit, he would have to resign as US president? Would he still have invaded as eagerly as he did? For key decisions, in law and in politics, all parties concerned deserve to be given a numerical indication of the confidence that underpinned that decision.

This raises another problem: just like judges and politicians, researchers may overstate their confidence in a claim. To truly assess their confidence, something needs to be on the line. In 1971, Eric Minturn made a refreshing proposal¹:

“The problem is, of course, to measure the confidence the investigator really has in his findings. Clearly he is aware of far more than his p value reflects. To mathematically assess the total value he places on his results, I suggest a ‘Wagers’ section in publications wherein the author simply attaches monetary significance (numbers!) to the results’ repeatability. Wagers could be taken through journal editors who could take a percentage of the bet to help lower publishing costs. By convention, failures to replicate would win the wager. ‘Putting your money where your p is’ would enable measures of highly replicable triviality (high wagers not taken), theory untestability (low wagers, few bets), spurious results (many wagers lost), heuristic value (low wagers, many takers), etc. Research funds would go to the best investigators. Replication would be encouraged. Graduate students would have a new source of money. Hypocrisy would be unmasked. Best of all, whether or not wagers were taken, psychologists would have a numerical foundation to supplant the current reliance on fallible and potentially fraudulent human judgment. One can also foresee inflated and depressed theoreticians as well as theories, bullish and bearish research markets, bluffing effects, reviewers selling their topics short, accusations of psychofiscal irresponsibility, etc., but these are small prices to pay for rigor and only make explicit the present state of affairs.” (Minturn, 1971, p. 669)

If betting money is deemed insufficiently dignified, one may follow the suggestion from Hofstee (1984) and bet units of “scientific reputation”. There is much more to be said about solving the problem of scientific overconfidence, but the De Morgan-Minturn approach appears to be a useful starting point for further discussion and exploration.

Footnotes

1 We discovered the reference to the Minturn paper in the highly recommended book by Theodore Barber (1976).

References

Barber, T. X. (1976). Pitfalls in Human Research: Ten Pivotal Points. New York: Pergamon Press Inc.

De Morgan, A. (1847/2003). Formal Logic: The Calculus of Inference, Necessary and Probable. Honolulu: University Press of the Pacific.

Hofstee, W. K. B. (1984). Methodological decision rules as research policies: A betting reconstruction of empirical research. Acta Psychologica, 56, 93-109.

Minturn, E. B. (1971). A proposal of significance. American Psychologist, 26, 669-670.

Zabell, S. (2012). De Morgan and Laplace: A tale of two cities. Electronic Journal for History of Probability and Statistics, 8. Available at http://emis.ams.org/journals/JEHPS/decembre2012/Zabell.pdf.

About The Authors

Eric-Jan Wagenmakers

Eric-Jan (EJ) Wagenmakers is professor at the Psychological Methods Group at the University of Amsterdam.

Fabian Dablander

Fabian is a PhD candidate at the Psychological Methods Group of the University of Amsterdam. You can find him on Twitter @fdabl.

Sophia Crüwell

Sophia is a Research Master’s student at the University of Amsterdam, majoring in Psychological Methods and Statistics. You can find her on Twitter @cruwelli.